Chat with a local LLM that can respond with information from your files, folders, and websites on your Mac without installing any other software. All conversations happen offline, and your data is saved locally.

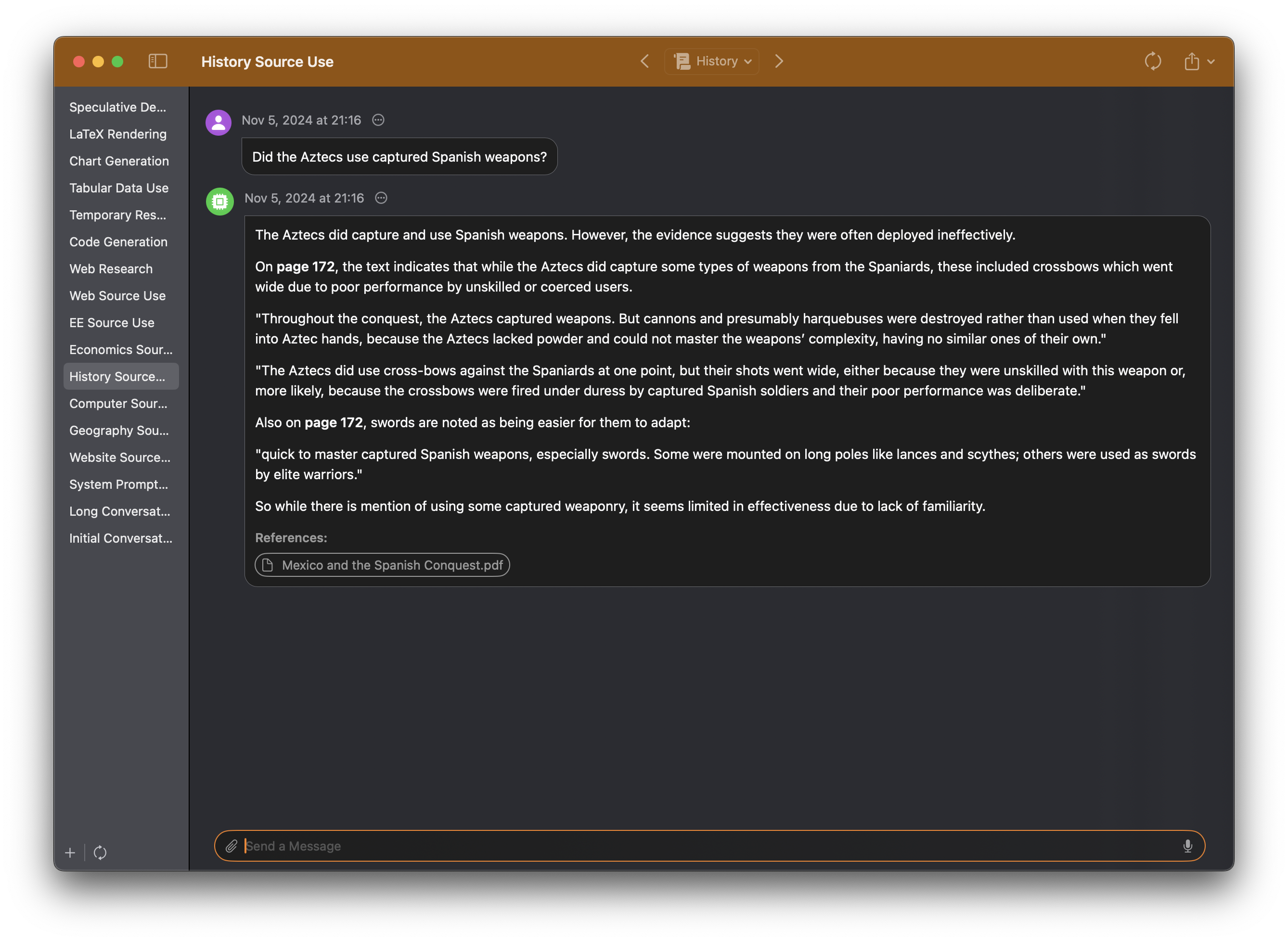

Let’s say you’re collecting evidence for a history paper about interactions between the Aztecs and Spanish troops, and you’re looking for text about whether the Aztecs used captured Spanish weapons.

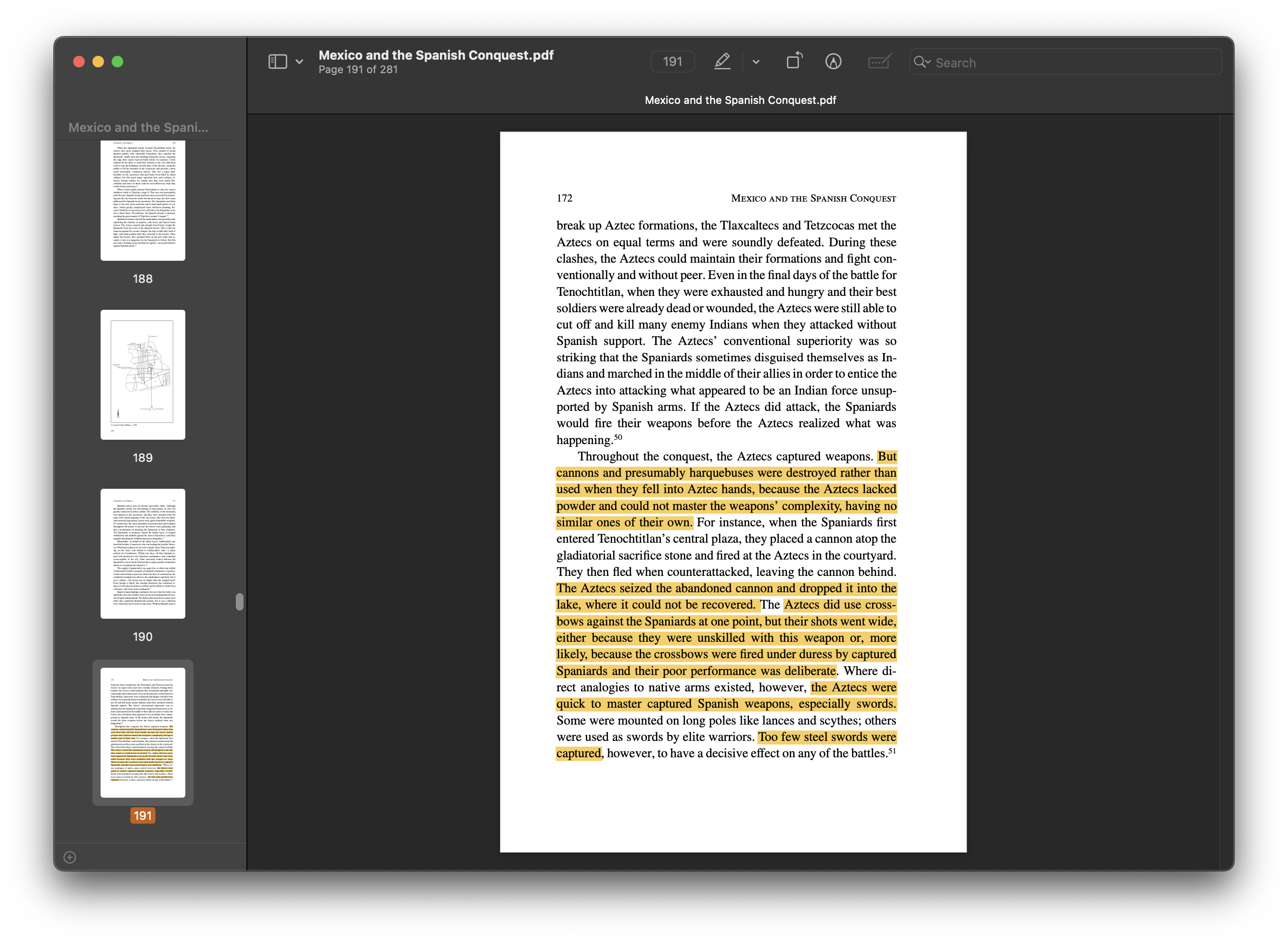

Here, you can ask Sidekick, “Did the Aztecs use captured Spanish weapons?”, and it responds with direct quotes, their page numbers, and a brief analysis.

To verify Sidekick’s answer, just click on the references displayed below Sidekick’s answer, and the academic paper referenced by Sidekick immediately opens in your viewer.

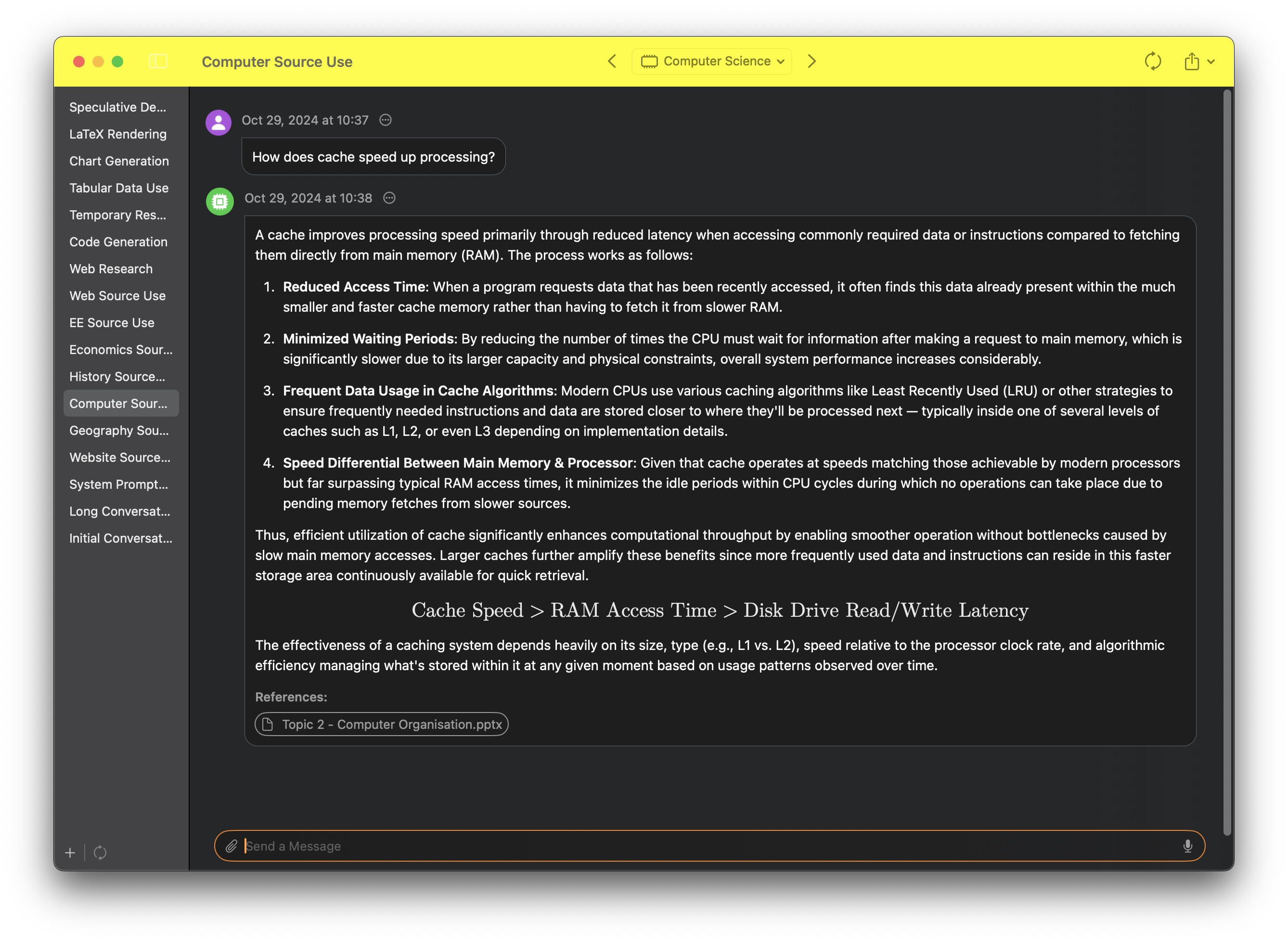

Sidekick accesses files, folders, and websites from your profiles, which can be individually configured to contain resources related to specific areas of interest. Activating a profile allows Sidekick to access the relevant information.

Because Sidekick uses RAG (Retrieval-Augmented Generation), you can theoretically put unlimited resources into each profile, and Sidekick will still find the information relevant to your request to aid its response. This is in sharp contrast to most services, including OpenAI's ChatGPT, which can only ingest ~45 pages of text.
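
To make the idea concrete, here is a minimal Python sketch of the retrieval step behind RAG. It is not Sidekick's implementation: the word-count cosine similarity, the function names (`retrieve`, `build_prompt`), and the placeholder chunks are all assumptions chosen for illustration, and a real system would typically use learned embeddings and a vector index. The point it shows is that only the top-scoring chunks reach the model, so the size of a profile does not inflate the prompt.

```python
import math
import re
from collections import Counter
from typing import List


def tokenize(text: str) -> List[str]:
    """Lowercase the text and split it into alphanumeric words."""
    return re.findall(r"[a-z0-9]+", text.lower())


def cosine(a: Counter, b: Counter) -> float:
    """Cosine similarity between two word-count vectors."""
    dot = sum(a[t] * b[t] for t in a.keys() & b.keys())
    norm_a = math.sqrt(sum(v * v for v in a.values()))
    norm_b = math.sqrt(sum(v * v for v in b.values()))
    return dot / (norm_a * norm_b) if norm_a and norm_b else 0.0


def retrieve(query: str, chunks: List[str], top_k: int = 2) -> List[str]:
    """Return up to top_k chunks that share words with the query, best first."""
    q = Counter(tokenize(query))
    scored = [(cosine(q, Counter(tokenize(c))), c) for c in chunks]
    scored.sort(key=lambda pair: pair[0], reverse=True)
    return [chunk for score, chunk in scored[:top_k] if score > 0]


def build_prompt(query: str, chunks: List[str], top_k: int = 2) -> str:
    """Assemble a prompt that contains only the retrieved chunks."""
    context = "\n".join(f"- {c}" for c in retrieve(query, chunks, top_k))
    return f"Answer using only this context.\n\nContext:\n{context}\n\nQuestion: {query}"


# Placeholder chunks standing in for text extracted from a profile (hypothetical).
CHUNKS = [
    "Chapter 3 discusses captured Spanish weapons.",
    "Chapter 7 covers coastal trade routes.",
    "The appendix lists maps and a glossary.",
]

if __name__ == "__main__":
    print(build_prompt("captured Spanish weapons", CHUNKS))
```

However many chunks a profile holds, `build_prompt` passes at most `top_k` of them to the model.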

For example, a student might create the profiles English Literature, Mathematics, Geography, Computer Science, and Physics. In the image below, the student has activated the Computer Science profile, allowing Sidekick to reply with information from that profile.

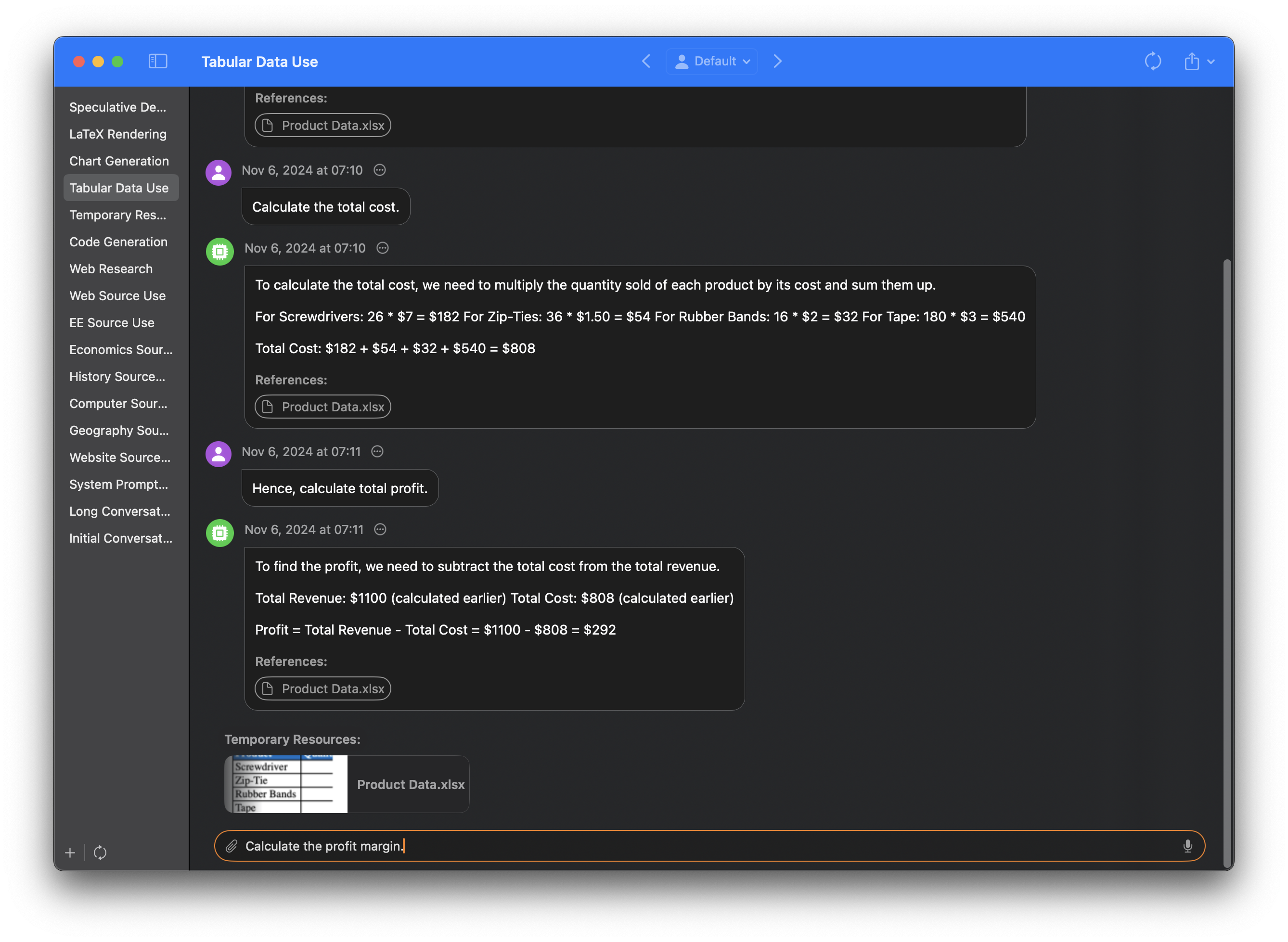

Users can also give Sidekick access to files just by dragging them into the input field.

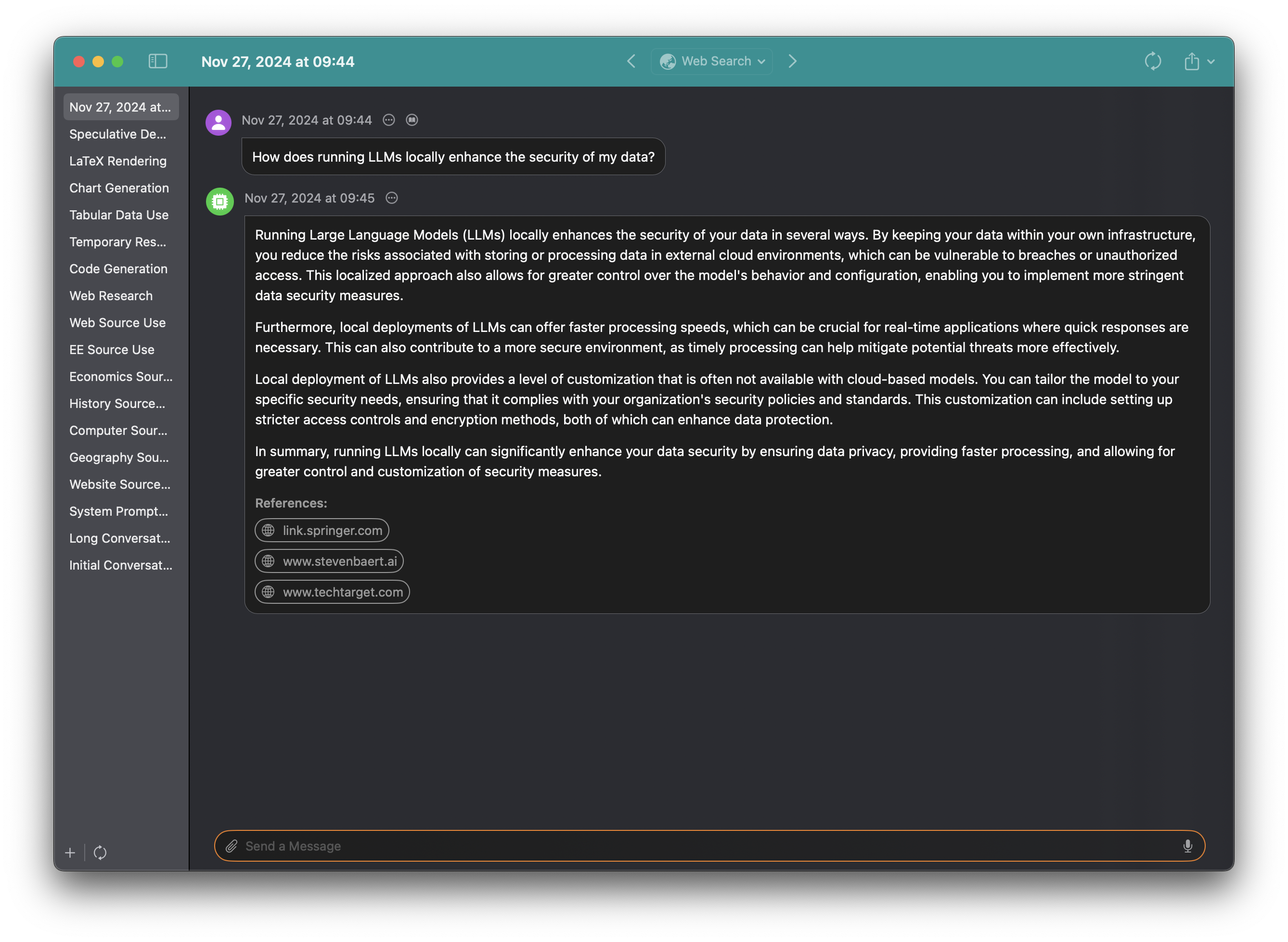

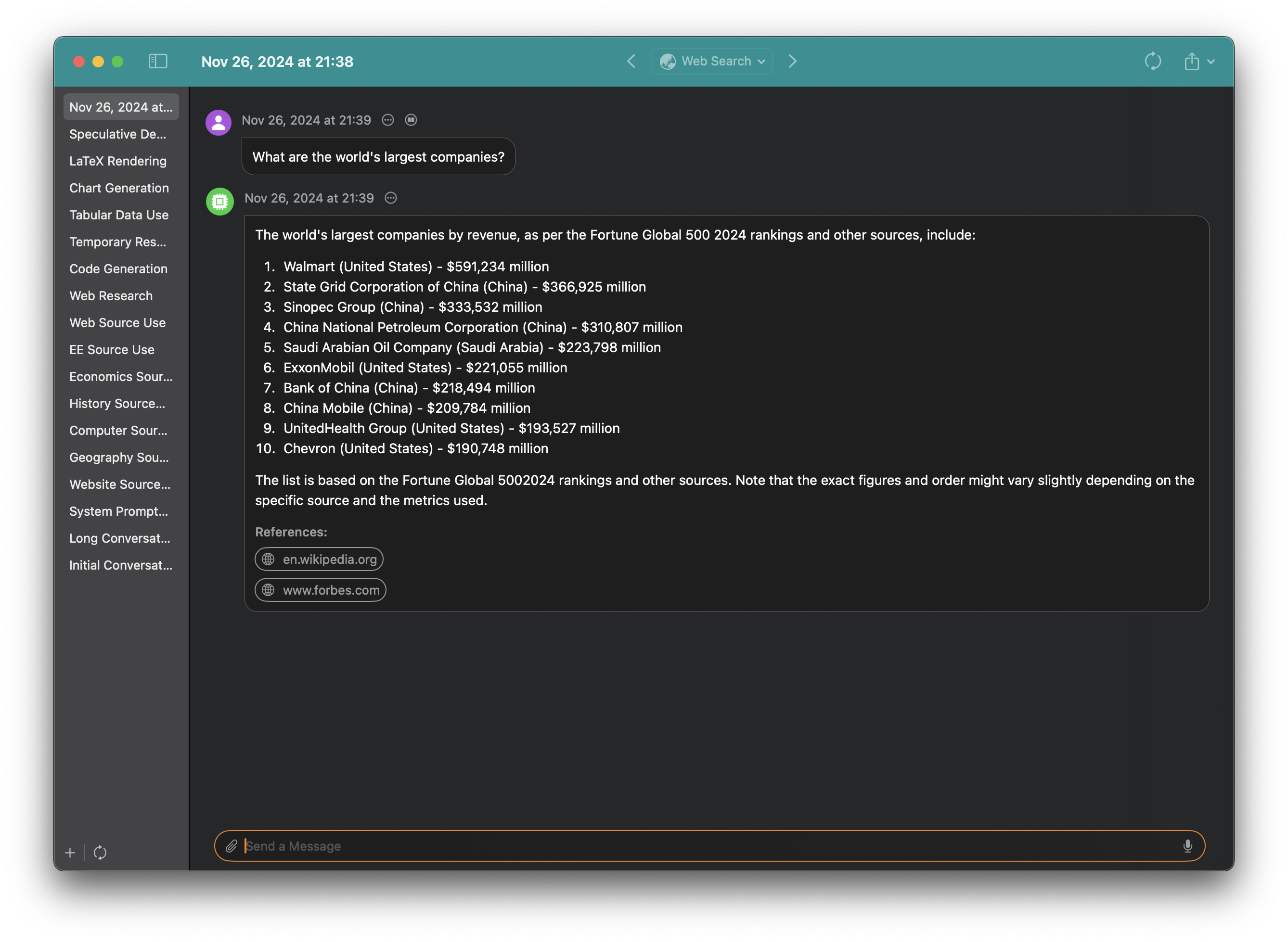

Sidekick can even respond with the latest information using web search, speeding up research.

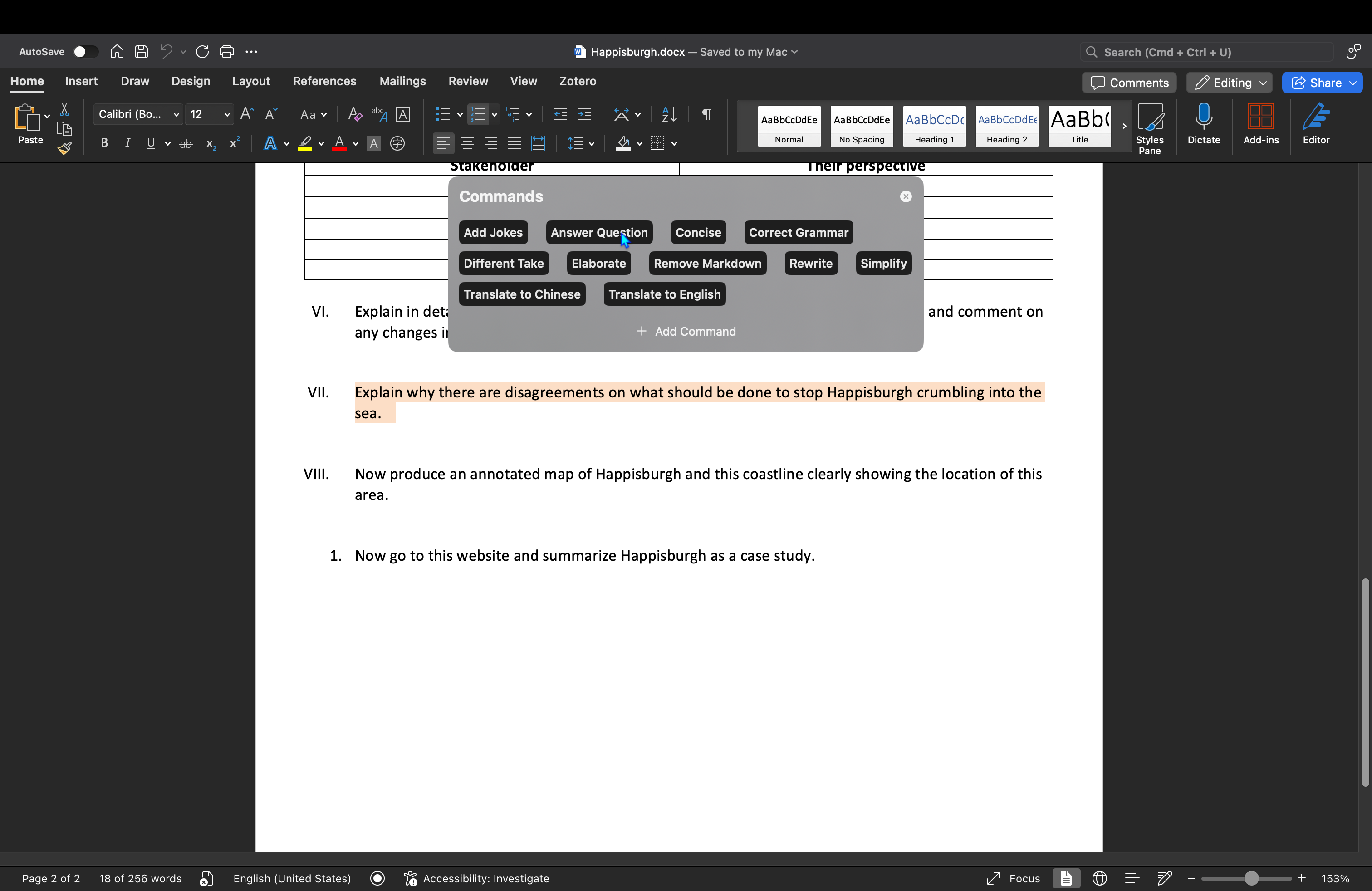

Press Command + Control + I to access Sidekick's inline writing assistant. For example, use the Answer Question command to do your homework without leaving Microsoft Word!

Markdown is rendered beautifully in Sidekick.

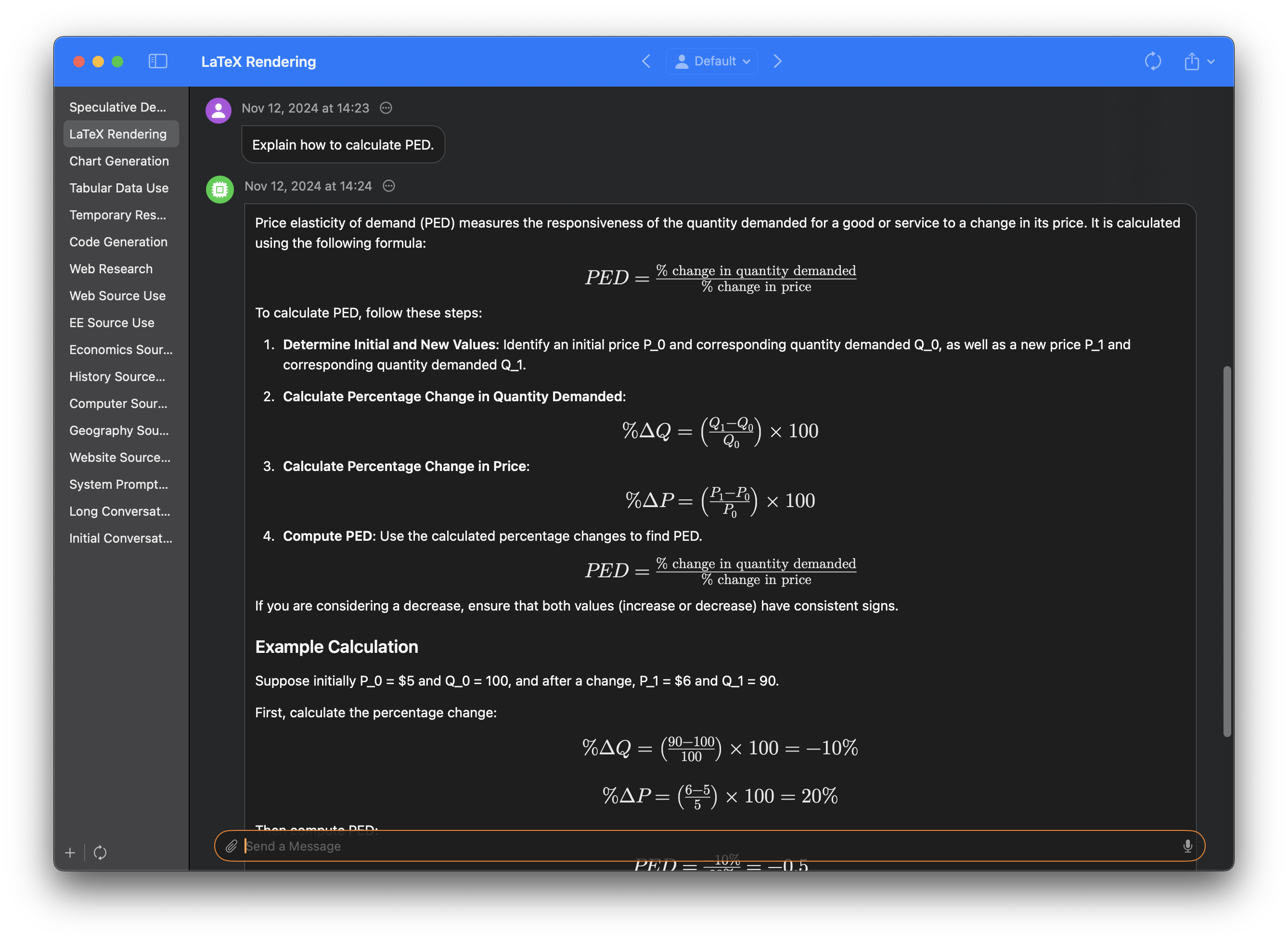

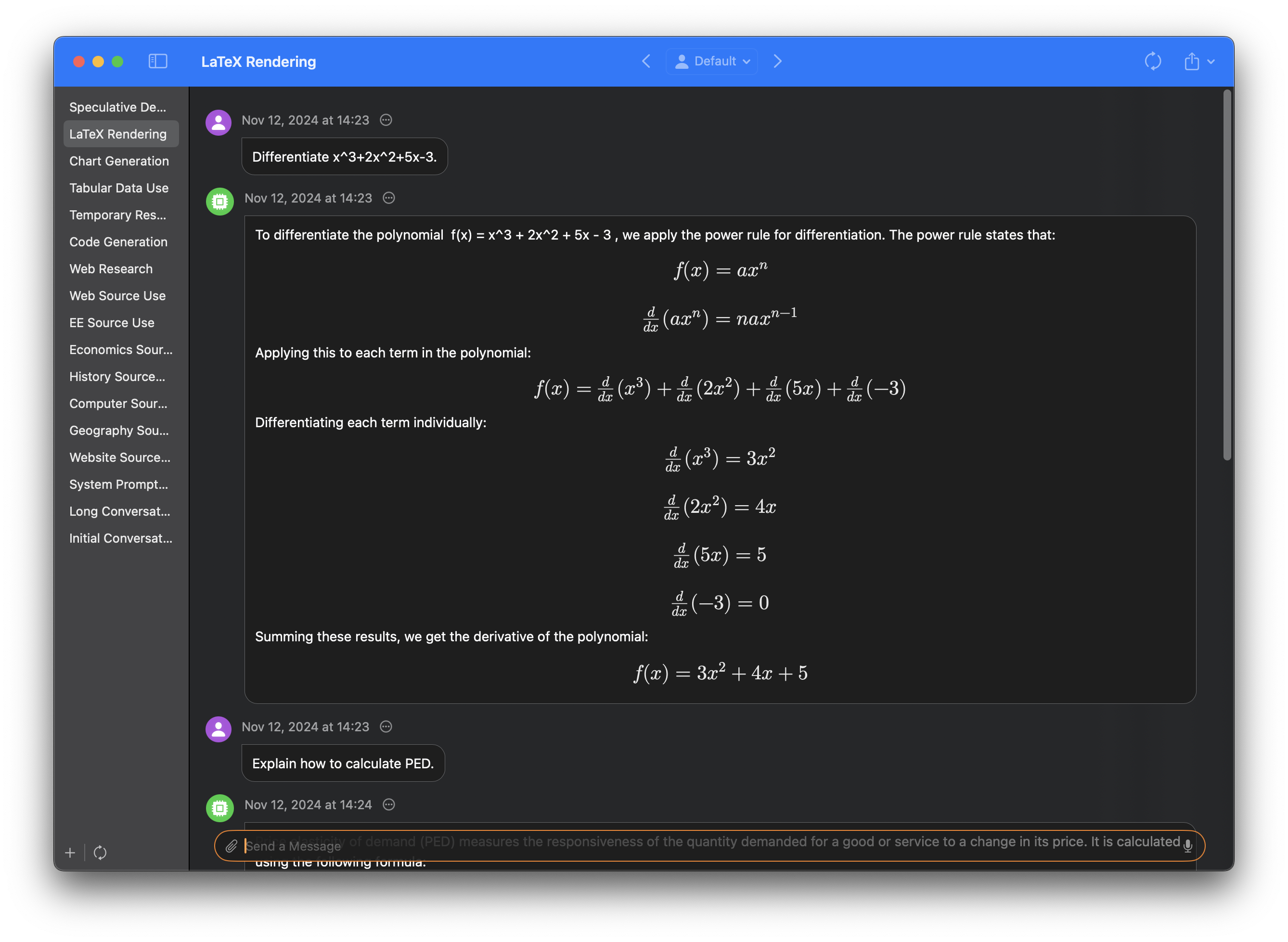

Sidekick offers native LaTeX rendering for mathematical equations.

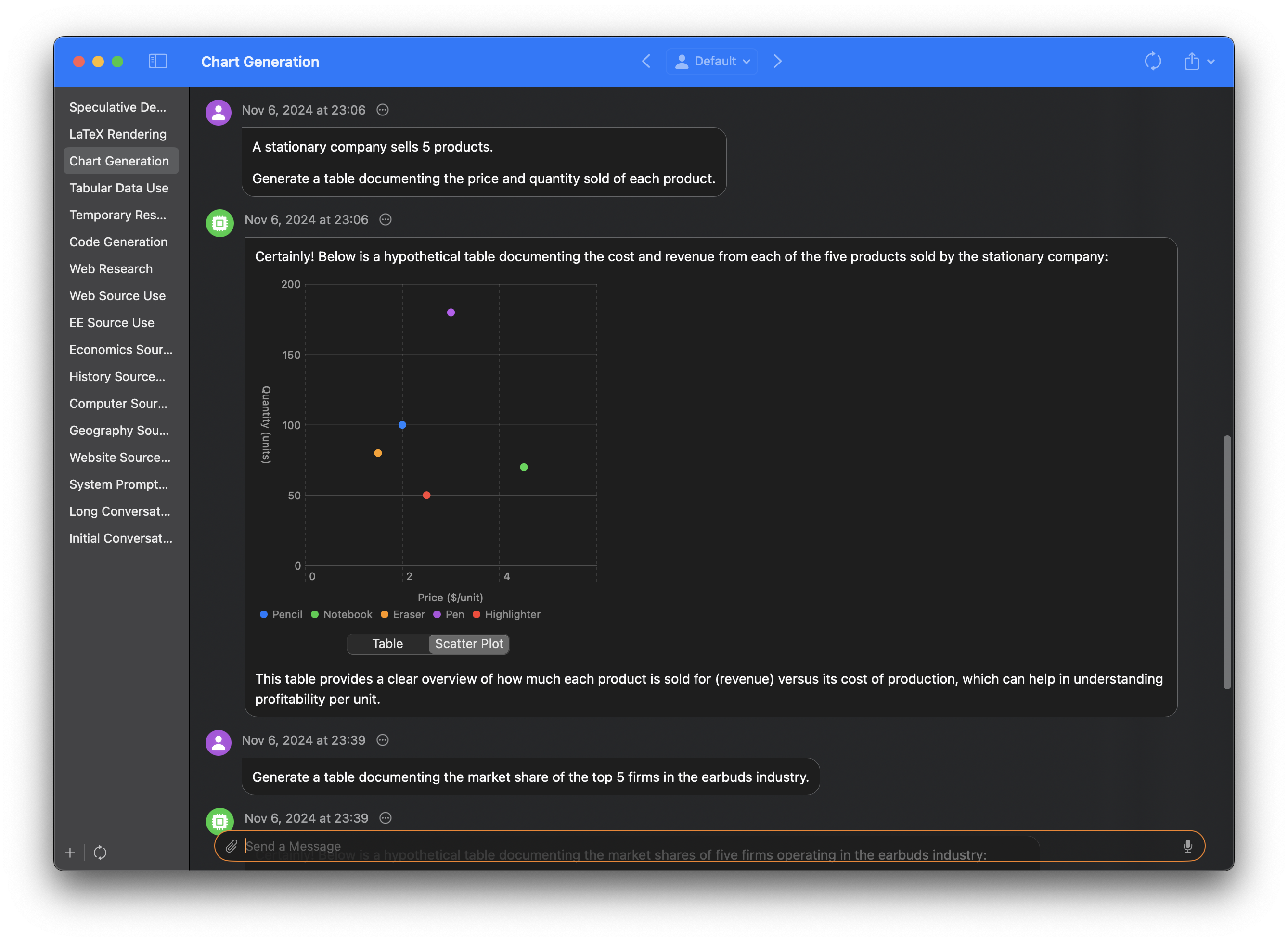

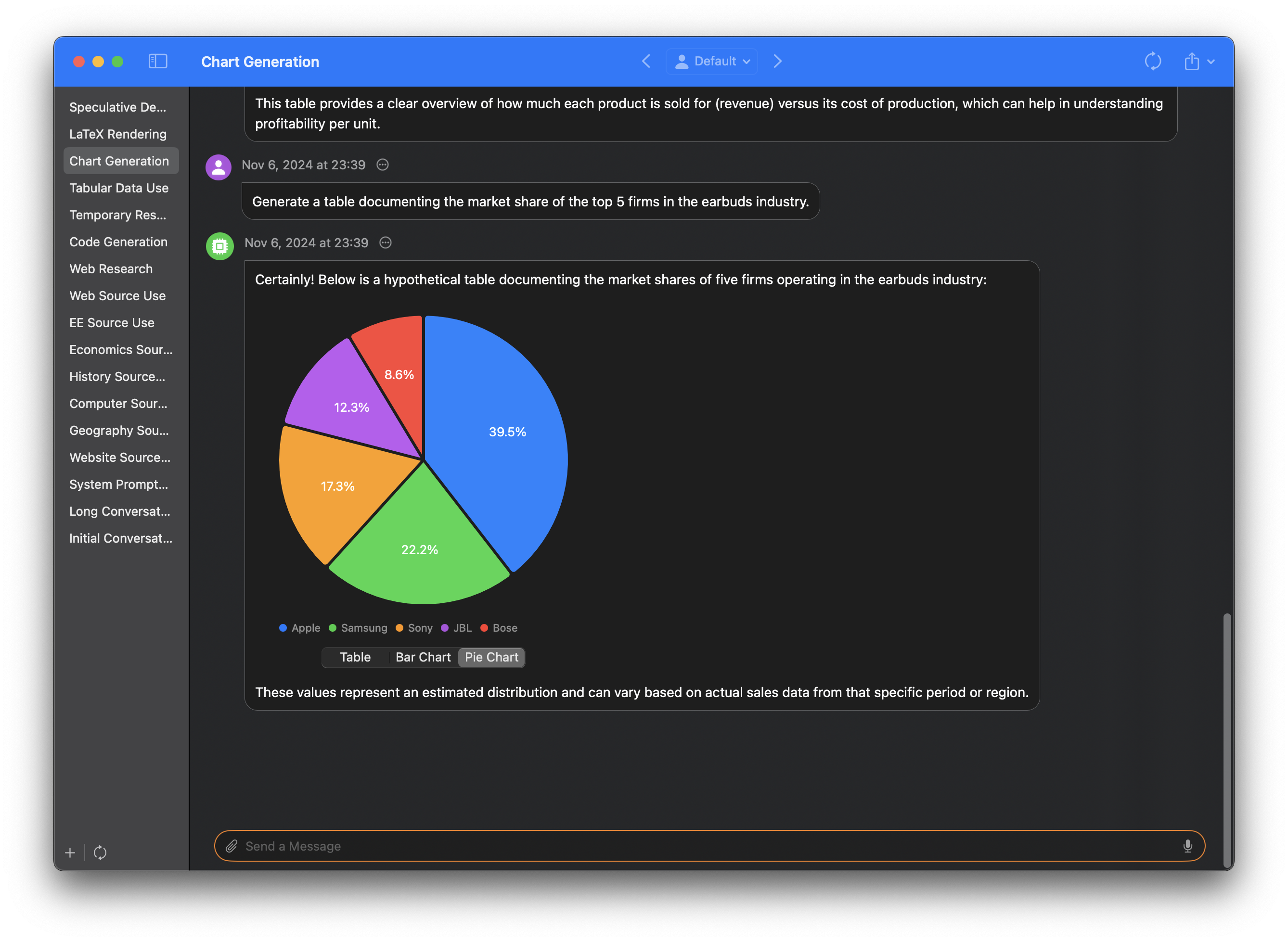

Visualizations are automatically generated for tables when appropriate, with a variety of charts available, including bar charts, line charts, and pie charts.

Charts can be dragged and dropped into third-party apps.

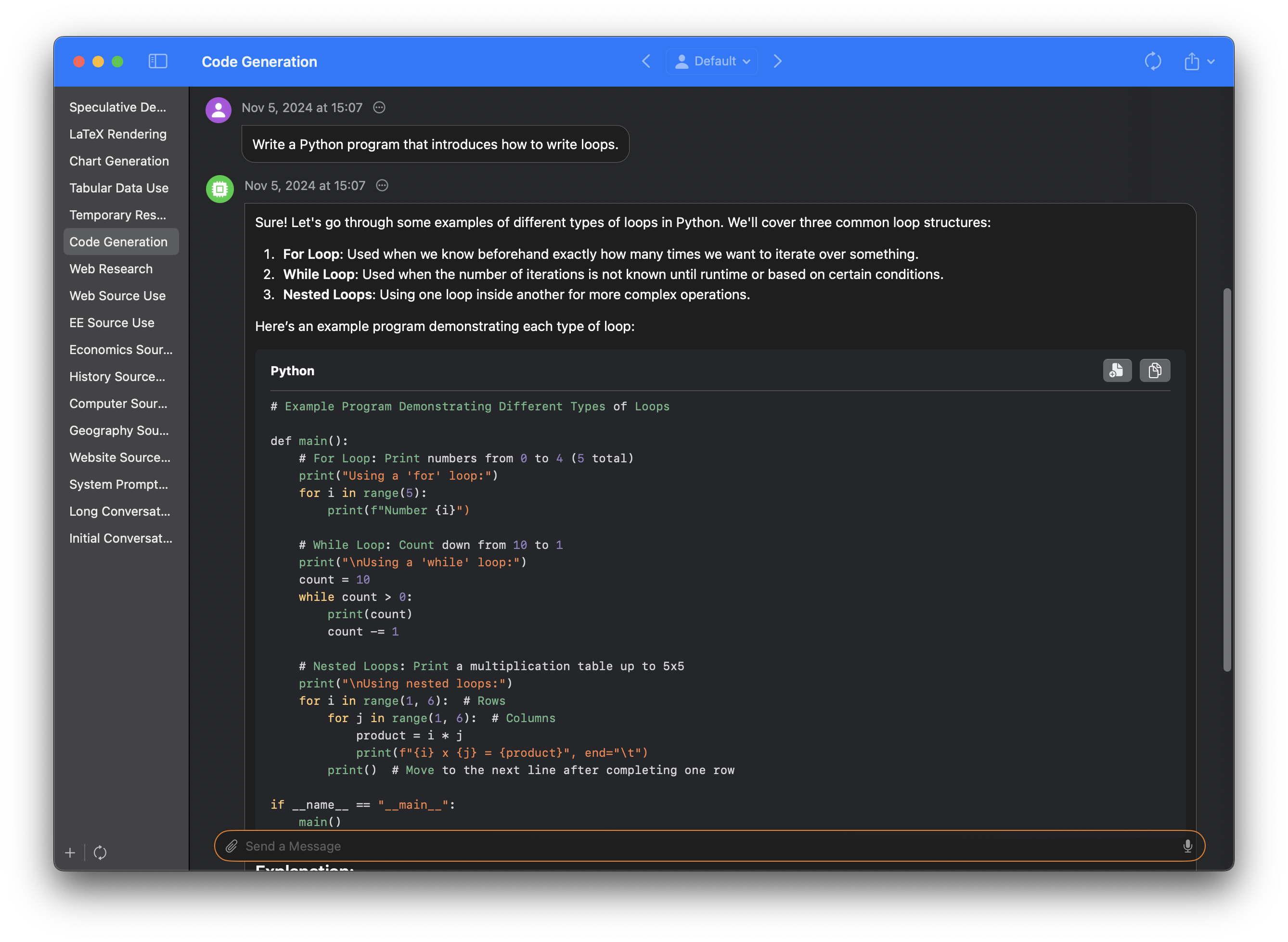

Code is beautifully rendered with syntax highlighting, and can be exported or copied at the click of a button.

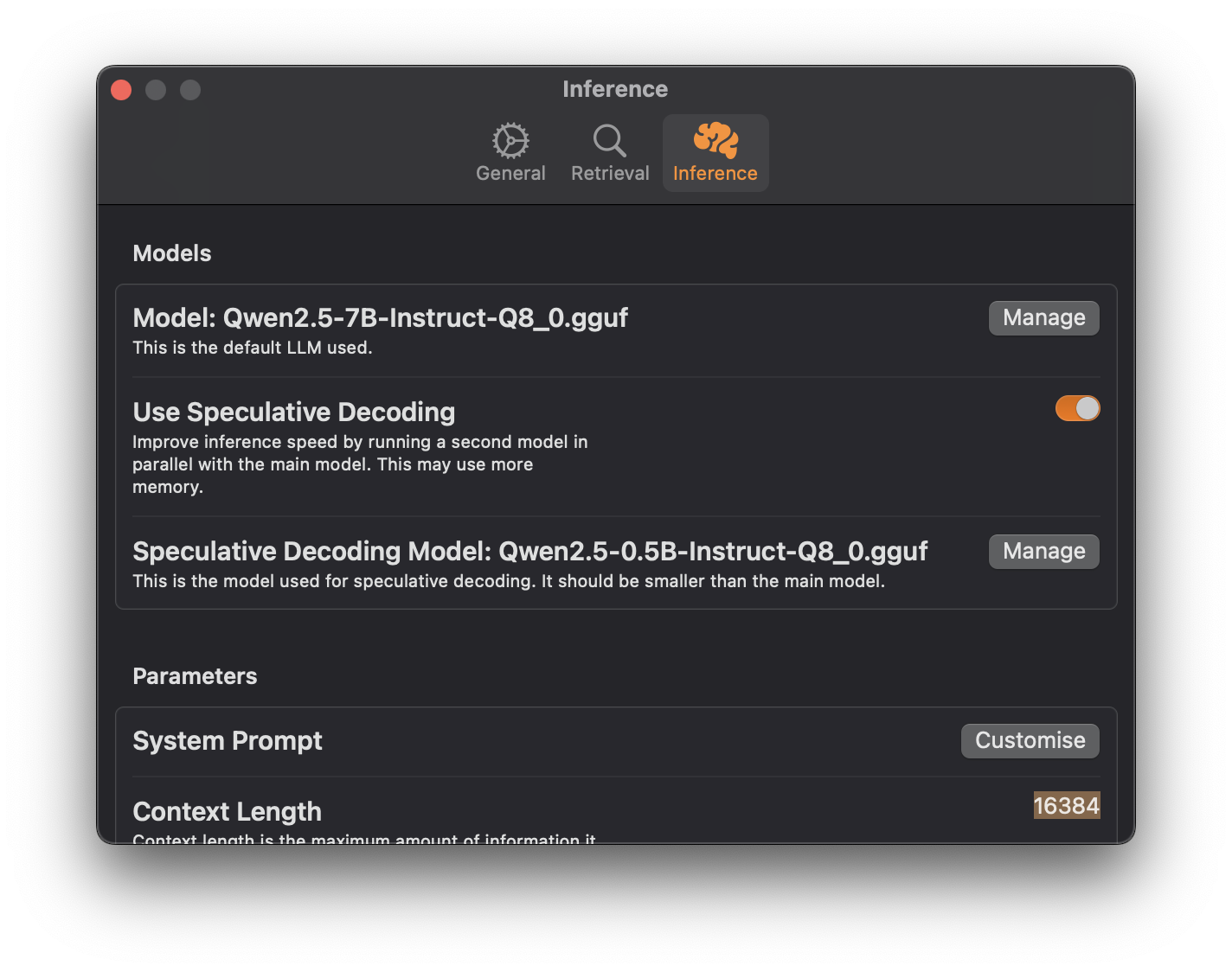

Sidekick uses llama.cpp as its inference backend, which is optimized to deliver lightning-fast generation speeds on Apple Silicon. Sidekick also supports speculative decoding, which can increase performance by up to 51%.

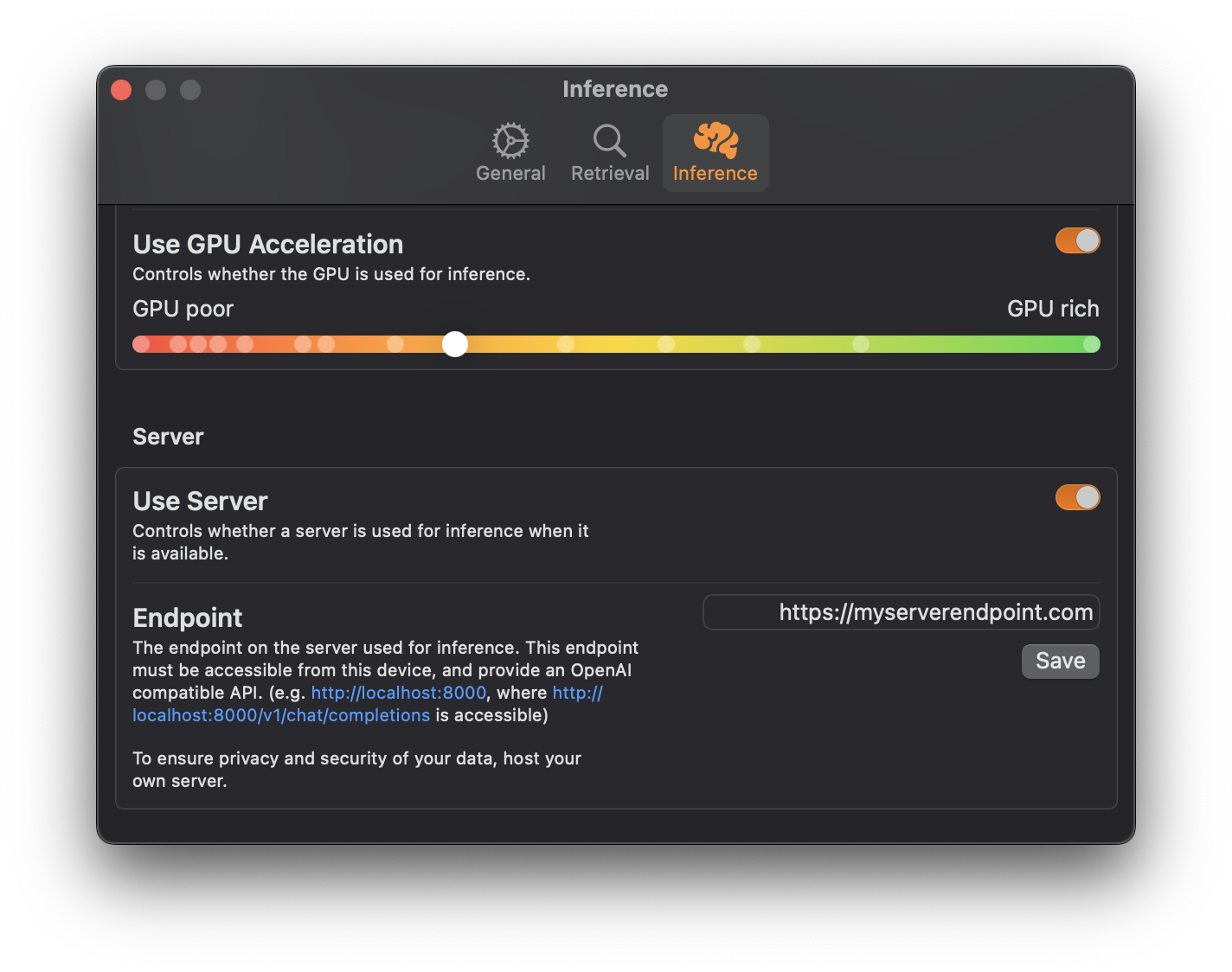
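
For readers curious how speculative decoding saves time, here is a toy Python sketch of the greedy variant. It is an illustration under stated assumptions, not llama.cpp's or Sidekick's code: `target_model` and `draft_model` are hypothetical stand-ins for a large model and a small, fast one, and a real engine verifies all drafted tokens in a single batched forward pass and uses a sampling-aware acceptance rule. The sketch shows that the output matches what the large model would produce alone, while the large model is consulted in far fewer rounds.

```python
from typing import Callable, List, Tuple

Token = str
NextToken = Callable[[List[Token]], Token]

SENTENCE = "the quick brown fox jumps over the lazy dog".split()


def target_model(ctx: List[Token]) -> Token:
    """Toy 'large' model: always continues a fixed sentence (hypothetical)."""
    return SENTENCE[len(ctx) % len(SENTENCE)]


def draft_model(ctx: List[Token]) -> Token:
    """Toy 'small' model: agrees with the target except at some positions."""
    if len(ctx) % 5 == 4:
        return "<guess>"
    return SENTENCE[len(ctx) % len(SENTENCE)]


def greedy_decode(model: NextToken, prompt: List[Token], n_new: int) -> List[Token]:
    """Baseline: one model call per generated token."""
    out = list(prompt)
    for _ in range(n_new):
        out.append(model(out))
    return out


def speculative_decode(
    target: NextToken, draft: NextToken, prompt: List[Token], n_new: int, k: int = 4
) -> Tuple[List[Token], int]:
    """Draft up to k tokens, then keep the prefix the target agrees with."""
    out = list(prompt)
    goal = len(prompt) + n_new
    rounds = 0  # verification rounds; each would be one batched target pass
    while len(out) < goal:
        # Step 1: the cheap draft model proposes a short run of tokens.
        proposal: List[Token] = []
        for _ in range(min(k, goal - len(out))):
            proposal.append(draft(out + proposal))
        # Step 2: the target checks the run and corrects the first mismatch.
        rounds += 1
        accepted: List[Token] = []
        for tok in proposal:
            expected = target(out + accepted)
            accepted.append(expected)
            if expected != tok:
                break
        out.extend(accepted)
    return out, rounds


if __name__ == "__main__":
    tokens, rounds = speculative_decode(target_model, draft_model, ["the"], 12)
    print(" ".join(tokens), "| verification rounds:", rounds)
```

The speedup depends on how often the draft model agrees with the target: each round yields at least one token, and up to `k` when every guess is accepted.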

Optionally, offload generation to your desktop to speed up generation while extending the battery life of your MacBook.

Requirements

- A Mac with Apple Silicon

- RAM ≥ 8 GB

Prebuilt Package

- Download the package from Releases and open it. Because the package is not notarized, you will need to allow it in System Settings.

Build it yourself

- Download the source, open it in Xcode, and build it.

The main goal of Sidekick is to make open, local, private models accessible to more people, and to let a local model draw context from selected files, folders, and websites.

Sidekick is a native LLM application for macOS that runs completely locally. Download it and ask your LLM a question without doing any configuration. Give the LLM access to your folders, files, and websites with just one click, allowing it to reply with context.

- No config. Usable by people who haven't heard of models, prompts, or LLMs.

- Performance and simplicity over developer experience or features. Notes not Word, Swift not Electron.

- Local first. Core functionality should not require an internet connection.

- No conversation tracking. Talk about whatever you want with Sidekick, just like Notes.

- Open source. What's the point of running local AI if you can't audit that it's actually running locally?

- Context aware. Aware of your files, folders, and content on the web.

Contributions are very welcome. Let's make Sidekick simple and powerful.

Contact this repository's owner at [email protected], or file an issue.

This project would not be possible without the hard work of: