This repository is an official implementation of MOTRv2.

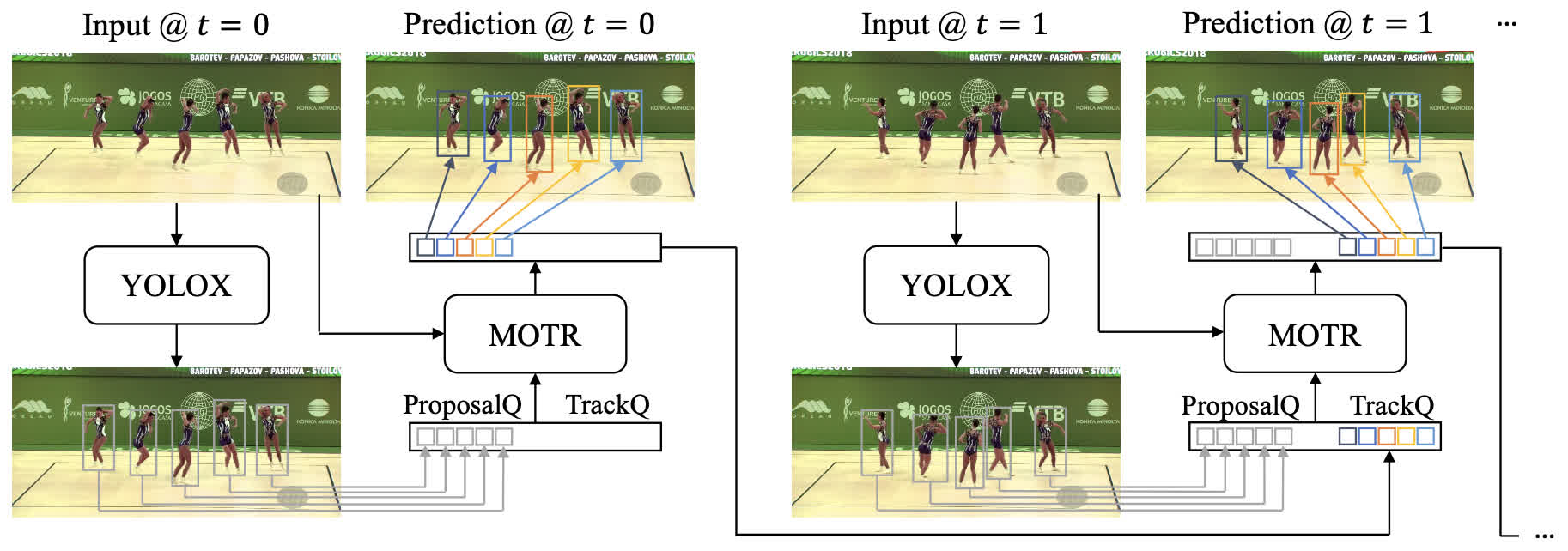

TL;DR. MOTRv2 improves MOTR by using YOLOX to provide a detection prior.

Abstract. In this paper, we propose MOTRv2, a simple yet effective pipeline to bootstrap end-to-end multi-object tracking with a pretrained object detector. Existing end-to-end methods, e.g., MOTR and TrackFormer, are inferior to their tracking-by-detection counterparts mainly due to their poor detection performance. We aim to improve MOTR by elegantly incorporating an extra object detector. We first adopt the anchor formulation of queries and then use an extra object detector to generate proposals as anchors, providing a detection prior to MOTR. This simple modification greatly eases the conflict between jointly learning the detection and association tasks in MOTR. MOTRv2 keeps the end-to-end feature and scales well on large-scale benchmarks. MOTRv2 achieves the top performance (73.4% HOTA) among all existing methods on the DanceTrack dataset. Moreover, MOTRv2 reaches state-of-the-art performance on the BDD100K dataset. We hope this simple and effective pipeline can provide some new insights to the end-to-end MOT community.
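The idea of turning detector proposals into anchors can be illustrated with a minimal sketch. It assumes, purely for illustration, that each proposal is a `(left, top, width, height, score)` tuple in pixels and that an anchor is a normalized `(cx, cy, w, h)` box that keeps the detector score. The function name `proposals_to_anchors` and the `score_thresh` parameter are hypothetical and are not taken from this repository's code.

```python
from typing import List, Tuple

Proposal = Tuple[float, float, float, float, float]  # left, top, width, height, score (pixels)
Anchor = Tuple[float, float, float, float, float]    # cx, cy, w, h (normalized), score


def proposals_to_anchors(
    proposals: List[Proposal],
    img_w: float,
    img_h: float,
    score_thresh: float = 0.0,  # hypothetical filter, not a documented option
) -> List[Anchor]:
    """Convert pixel-space detector boxes into normalized center-format anchors."""
    anchors = []
    for left, top, w, h, score in proposals:
        if score < score_thresh:
            continue  # drop low-confidence proposals
        cx = (left + w / 2) / img_w  # box center, normalized by image width
        cy = (top + h / 2) / img_h   # box center, normalized by image height
        anchors.append((cx, cy, w / img_w, h / img_h, score))
    return anchors
```

For example, `proposals_to_anchors([(100, 50, 200, 100, 0.9)], img_w=400, img_h=200)` yields a single anchor centered in the image that covers half of its width and height.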

- 2023.02.28 MOTRv2 is accepted to CVPR 2023.

- 2022.11.18 MOTRv2 paper is available on arXiv.

- 2022.10.27 Our DanceTrack challenge tech report is released [arXiv] [ECCVW Challenge].

- 2022.10.05 MOTRv2 achieved the 1st place in the 1st Multiple People Tracking in Group Dance Challenge.

| HOTA | DetA | AssA | MOTA | IDF1 | URL |
|---|---|---|---|---|---|
| 69.9 | 83.0 | 59.0 | 91.9 | 71.7 | model |

[Visual comparison: SORT-like SoTA (left) vs. MOTRv2 (right)]

The codebase is built on top of Deformable DETR and MOTR.

- Install pytorch using conda (optional)

  ```bash
  conda create -n motrv2 python=3.7
  conda activate motrv2
  conda install pytorch=1.8.1 torchvision=0.9.1 cudatoolkit=10.2 -c pytorch
  ```

- Other requirements

  ```bash
  pip install -r requirements.txt
  ```

- Build MultiScaleDeformableAttention

  ```bash
  cd ./models/ops
  sh ./make.sh
  ```

- Download the YOLOX detections from here.

- Please download DanceTrack and CrowdHuman and unzip them as follows:

```
/data/Dataset/mot
├── crowdhuman
│   ├── annotation_train.odgt
│   ├── annotation_trainval.odgt
│   ├── annotation_val.odgt
│   └── Images
├── DanceTrack
│   ├── test
│   ├── train
│   └── val
├── det_db_motrv2.json
```
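Before training, it can help to confirm that the data root matches the layout above. The following is a minimal sketch, not part of the repository: the helper name `missing_entries` is hypothetical, and it only checks that each listed path exists, not that its contents are valid.

```python
from pathlib import Path

# Entries taken from the directory tree above, relative to the data root.
EXPECTED = [
    "crowdhuman/annotation_train.odgt",
    "crowdhuman/annotation_trainval.odgt",
    "crowdhuman/annotation_val.odgt",
    "crowdhuman/Images",
    "DanceTrack/test",
    "DanceTrack/train",
    "DanceTrack/val",
    "det_db_motrv2.json",
]


def missing_entries(root):
    """Return the expected relative paths that do not exist under `root`."""
    root = Path(root)
    return [rel for rel in EXPECTED if not (root / rel).exists()]


if __name__ == "__main__":
    missing = missing_entries("/data/Dataset/mot")
    if missing:
        print("Missing entries:")
        for rel in missing:
            print("  " + rel)
    else:
        print("Dataset layout looks complete.")
```

Note that `annotation_trainval.odgt` is reported as missing until it has been generated from the train and val annotations.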

You may use the following command to generate the CrowdHuman trainval annotation:

```bash
cat annotation_train.odgt annotation_val.odgt > annotation_trainval.odgt
```

You may download the COCO-pretrained weights from Deformable DETR (+ iterative bounding box refinement) and set the `--pretrained` argument to the path of the weights. Then train MOTR on 8 GPUs as follows:

```bash
./tools/train.sh configs/motrv2.args
```

```bash
# run a simple inference on our pretrained weights
./tools/simple_inference.sh ./motrv2_dancetrack.pth

# Or evaluate an experiment run
# ./tools/eval.sh exps/motrv2/run1

# then zip the results
zip motrv2.zip tracker/ -r
```
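On systems without the `zip` command, the archiving step can be approximated with Python's standard library. This is a sketch under the assumption that the results live in a `tracker/` directory inside the working directory, as in the command above; the function name `zip_tracker_results` is hypothetical.

```python
import shutil
from pathlib import Path


def zip_tracker_results(work_dir=".", results_dir="tracker", archive_name="motrv2"):
    """Create <archive_name>.zip in work_dir containing results_dir and its contents."""
    work_dir = Path(work_dir).resolve()
    # make_archive appends the ".zip" suffix itself; base_dir keeps the
    # "tracker/" prefix inside the archive, similar to `zip -r`.
    return shutil.make_archive(
        base_name=str(work_dir / archive_name),
        format="zip",
        root_dir=str(work_dir),
        base_dir=results_dir,
    )
```

Calling `zip_tracker_results()` from the directory that contains `tracker/` returns the path of the created `motrv2.zip`.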